Singing the praises of odd numbers

23 Sep 2019

Annoy peer reviewers and create an inclusive numerical policy? It’s a win-win for Dr Matthew Partridge in his quest to right a numerical wrong…

This article has exactly 513 words. I could have easily made it a nice round 520 but I purposely left one word out just make it an odd number.

My reason for this is to support the poor forgotten numbers of science.

In science we generate a lot of numbers in the form of data. The distribution of numbers in data is very much out of the hands of the researchers (or at least generally should be) and is entirely at the mercy of experimental setups, the fancy code that is used to run them, and the hamster's preferences that morning.

However, in science there are plenty of numbers we choose, and when scientists choose numbers we choose both nice round ones and ones we've chosen before.

In experiments there are a lot of numbers input by us, the researchers, including number of repeats, time between measurements, etc. Often these are chosen on a sort of best-practice or best-estimate basis. I could very happily write an entire article on how to choose how many repeats to do (I get far too excited about it). But there is a lot of wiggle room as it is rarely critical, provided you are in the right vague range.

Without fail, when we do choose these numbers they are always nice comfortable numbers - like repeats of 2 or 10. You can't throw a virtual stone without finding a paper with someone increasing their concentrations in steps of 2 mg or power by 5 watts.

Which is fine I suppose. They are typically numbers that are easy to remember and easy to do simple maths on.

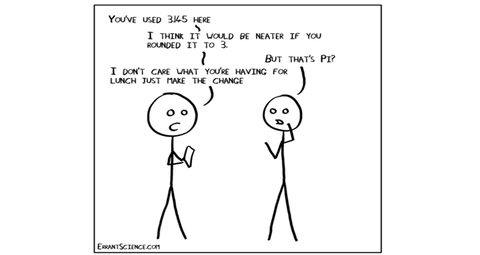

But, we've got very used to this – to the point where I've seen people criticised for choosing numbers outside of these 'nice' numbers. For example, the other week a researcher I know got comments back on their paper saying:

"Why did you choose an interval of 3.4? An interval of 3 or 4 would have been better. This data should be re-run for clarity"

There was no possible reason using round numbers would have changed anything about the experiment or the data. It was, at best, an aesthetic choice and one that was pretty arbitrary for data that was shown in a graph.

But even I've been guilty of this. I've wrinkled my nose at data that isn't nicely spaced with a simple doubling or multiple. I'm so used to it that I now expect everyone to have nice round values for everything. And in about 90% of cases there is no logical reason; it's just because that's the way everyone does it. Which is, of course, the worst reason.

So when doing your next experiments, take samples every 11 seconds instead of 10 or run things in repeats of 21 instead of 20. You'll be helping correct a terrible imbalance and giving a chance to all those rarely used numbers that feel all left out.

Also, you'll make it impossibly hard to look at your data and make estimations, and you'll drive reviewers slightly mad, which is obviously the best outcome.

Dr Matthew Partridge is a Senior Research Fellow at the Optoelectronics Research Centre at the University of Southampton, but describes himself as a biochemist who has accidentally ended up working with optical sensor systems.