A significantly different approach

20 Jul 2017 by Evoluted New Media

A recent study has thrown a spotlight on the very real problem of data fabrication. We caught up with Dr John Carlisle to find out about the computer program he has developed to screen research papers and test the likelihood of the data being correct.

Why did you choose to develop this screening tool? It wasn't designed as a screening tool. I was trying to analyse the trials of Yoshitaka Fujii, a Japanese researcher in anaesthesiology who in 2012 was found to have fabricated data in at least 183 scientific papers. Dr Fujii's work had been under suspicion for a long time, since before 2000, yet there had been no official investigation of it. I had become familiar with his trials between 2003 and 2006, when I was assembling a systematic review that included his papers. None had been retracted, so I was in a quandary about how to deal with trials that were thought to be 'wrong' but had not been officially investigated. By 2010 two anaesthetists, Scott Reuben and Joachim Boldt, were under investigation and their work was being retracted. It struck me as appropriate to ask that Dr Fujii's work be investigated. The answer I got was: "Can you give some evidence that would convince people to investigate?" That is when I started work on the tool. Once it was developed, it became apparent that it could be used on other people's work too.

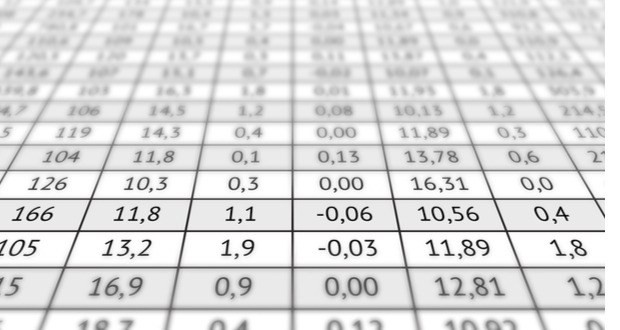

Is it just for randomised controlled trials (RCTs), and how does it work? Yes, it's just for RCTs. It uses the same tests that are familiar to people calculating the probability of a difference between groups, namely the independent t-test and ANOVA. In addition, it uses Monte Carlo simulations, which are particularly useful when the mean values of two (or more) groups are reported as the same, for instance 172 cm and 172 cm. A t-test (or ANOVA) would give p = 1, which is nonsense, because it implies that two groups could not have means any closer together. But they could: for instance 172.0 cm and 172.0 cm, or, relative to their spread, the same reported means in smaller groups or with bigger standard deviations. The t-test, ANOVA and Monte Carlo results give a p-value for each baseline variable, for instance height, age and weight. The tool then combines these p-values into a single p-value for the whole RCT.
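The Monte Carlo idea and the final combining step can be sketched in Python with only the standard library. This is a minimal illustration under stated assumptions, not the Carlisle tool's own code: the function names `mc_p_means_as_close` and `stouffer` are hypothetical, each group mean is drawn directly from a normal sampling distribution (SD/√n) centred on the size-weighted pooled mean, and the per-variable p-values are combined with Stouffer's method, which is one common choice; the interview does not say which combination method the tool uses. The simulated p-value is one-sided: it estimates how often randomised groups would report means at least as close as those published, so a small value means the groups look more alike than chance would usually allow.

```python
import random
from statistics import NormalDist


def mc_p_means_as_close(mean1, sd1, n1, mean2, sd2, n2, decimals=0, sims=20_000, seed=1):
    """Monte Carlo estimate of how often two groups drawn from one population
    would report means at least as close as observed, after both simulated
    means are rounded to `decimals` places (the paper's reporting precision)."""
    rng = random.Random(seed)
    scale = 10 ** decimals
    # Work in integer units of the reporting precision to avoid float noise.
    observed_gap = abs(round(mean1 * scale) - round(mean2 * scale))
    # Assumption: with genuine randomisation both groups share one population,
    # estimated here by the size-weighted mean of the two reported means.
    pooled_mean = (n1 * mean1 + n2 * mean2) / (n1 + n2)
    se1, se2 = sd1 / n1 ** 0.5, sd2 / n2 ** 0.5
    hits = 0
    for _ in range(sims):
        # Draw each group mean from its approximately normal sampling distribution.
        m1 = rng.gauss(pooled_mean, se1)
        m2 = rng.gauss(pooled_mean, se2)
        if abs(round(m1 * scale) - round(m2 * scale)) <= observed_gap:
            hits += 1
    return hits / sims


def stouffer(p_values):
    """Combine one-sided p-values into a single p-value with Stouffer's Z method."""
    nd = NormalDist()
    eps = 1e-12  # inv_cdf is undefined at exactly 0 or 1, so clamp the inputs
    zs = [nd.inv_cdf(1 - min(max(p, eps), 1 - eps)) for p in p_values]
    z = sum(zs) / len(zs) ** 0.5
    return 1 - nd.cdf(z)


if __name__ == "__main__":
    # Hypothetical baseline table, 20 participants per group, identical reported means.
    p_height = mc_p_means_as_close(172, 10, 20, 172, 10, 20)
    p_age = mc_p_means_as_close(45, 12, 20, 45, 12, 20)
    p_weight = mc_p_means_as_close(78, 14, 20, 78, 14, 20)
    print(p_height, p_age, p_weight, stouffer([p_height, p_age, p_weight]))
```

Simulating the group means directly, rather than individual participants, keeps the sketch short; a fuller version might simulate raw values and handle more than two groups.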

You previously applied this tool to studies in the journal *Anaesthesia*. What influenced you to apply it to a wider range of journals? I was interested to find out whether anaesthetic studies are more likely to contain erroneous data than studies in other disciplines. Anaesthetists don't have a very good track record: 3 of the 15 biomedical researchers with the most retractions are anaesthetists.

Are we looking at human error or deliberate fraud? That is the question. One can't tell, although one might look for other signals that accompany made-up data. Within a single trial, these can include repeated numbers, digit preference, proportions that are incompatible with the stated rates (e.g. 4 of 12 women reported as 38%, when 4/12 is 33%), non-uniform distributions of trailing digits, and image manipulation. When comparing trials, the same factors apply, as well as numbers, rates, graphs and so on that are repeated from other trials.
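Two of these within-trial signals can be checked mechanically. The Python sketch below, with hypothetical function names, tests whether a reported percentage could arise from any whole count out of n (allowing half a reporting unit either way for rounding) and computes a chi-square statistic for trailing digits against a uniform distribution over 0 to 9. It assumes the values are integers with enough spread that their last digits should be roughly uniform; 16.919 is the standard 5% critical value for chi-square with 9 degrees of freedom.

```python
from collections import Counter

# Standard 5% critical value of the chi-square distribution with 9 degrees of freedom.
CHI2_9DF_CRITICAL_05 = 16.919


def percentage_is_consistent(count, n, reported_pct, decimals=0):
    """True if count/n, as a percentage, rounds to reported_pct at `decimals` places."""
    half_step = 0.5 * 10 ** -decimals
    # Half a reporting unit either way accepts both rounding conventions at exact ties.
    return abs(100 * count / n - reported_pct) <= half_step + 1e-9


def possible_counts(n, reported_pct, decimals=0):
    """All whole counts out of n that are compatible with the reported percentage."""
    return [k for k in range(n + 1) if percentage_is_consistent(k, n, reported_pct, decimals)]


def trailing_digit_chi_square(values):
    """Chi-square statistic comparing the last digits of integers with a uniform distribution."""
    counts = Counter(abs(v) % 10 for v in values)
    expected = len(values) / 10
    return sum((counts.get(d, 0) - expected) ** 2 / expected for d in range(10))


if __name__ == "__main__":
    # The interview's example: 4 of 12 women reported as 38%.
    print(percentage_is_consistent(4, 12, 38), possible_counts(12, 38))
```

No count out of 12 rounds to 38%, so the reported figure cannot be right as printed, whatever the cause.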

Is statistics an underdeveloped skill in science? Numeracy is the key to science. One extreme opinion is that everything is mathematics. Even if one is not that strongly opinionated, I think many people, myself included, appreciate that they would understand more (and avoid errors) if they understood numbers better. I think training without context is fairly useless: you go on a course, you learn, you forget, and you never really understood. Training should be in the context of what you do; we would need mentoring within the workplace.

You've released the Carlisle tool as freeware. What's next? Correcting errors: I hope that people will test it, find out where it is wrong and tell me, and then we can improve it. A small addition would be to determine whether we can analyse continuous data in RCTs that are summarised by a measure other than the mean (SD or SEM), for instance the median (interquartile range). Then we could add functions to analyse categorical data. We could also analyse other numerical characteristics, such as digit preference. A natural development would be an app and a web-based tool, and then linking it with software that can work through a trial and pull numbers out of tables and so on, i.e. automating it. Personally, I'll look at authors of more than one RCT with a small p-value and search for trials they've authored that I didn't survey, to see whether any authors or groups of authors have consistently generated unlikely data.
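The author-level follow-up described here amounts to a simple grouping step. A minimal Python sketch follows; the input format (trial ID, author list, combined p-value), the function name and the 0.001 threshold are all hypothetical choices for illustration, not values from the study.

```python
from collections import defaultdict


def authors_with_repeated_flags(trials, threshold=0.001, min_trials=2):
    """Return {author: [trial IDs]} for authors who appear on at least
    `min_trials` trials whose combined baseline p-value is below `threshold`.

    `trials` is an iterable of (trial_id, authors, p_value) tuples (hypothetical format).
    """
    flagged = defaultdict(list)
    for trial_id, authors, p in trials:
        if p < threshold:
            # set() avoids double-counting an author listed twice on one trial.
            for author in set(authors):
                flagged[author].append(trial_id)
    return {author: ids for author, ids in flagged.items() if len(ids) >= min_trials}


if __name__ == "__main__":
    example = [("T1", ["A", "B"], 1e-5), ("T2", ["A"], 1e-4), ("T3", ["B", "C"], 0.3)]
    print(authors_with_repeated_flags(example))
```

A list like this is only a starting point for searching out an author's other trials; a small p-value on its own does not distinguish error from fabrication.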