The crystal clarity of deep learning

3 Oct 2017 by Evoluted New Media

Crystallography is the researcher’s tool of choice to reveal protein structure – but it can be slow and laborious. Sean McGee explains how deep machine learning could finally prove to be the key that opens up automated analysis of protein crystals

Crystallography has long been an indispensable tool for elucidating the structure of a wide variety of molecules. This is especially true of biological macromolecules, where it has granted generations of researchers insight into how these large molecules work and interact.

For pharmaceutical research, understanding the structure of a protein assists researchers in the rational design of new small- and large-molecule therapeutics, as they can tailor their candidates to interact more effectively with their target. However, while the number of complete structures in protein structure databases has grown exponentially over the past decade, there are still gaps in these databases due to the laborious nature of determining a de novo protein structure. Recent advances in technology have eased the burden somewhat by automating some of the setup work, but opportunities remain for automation to further improve later-stage analysis.

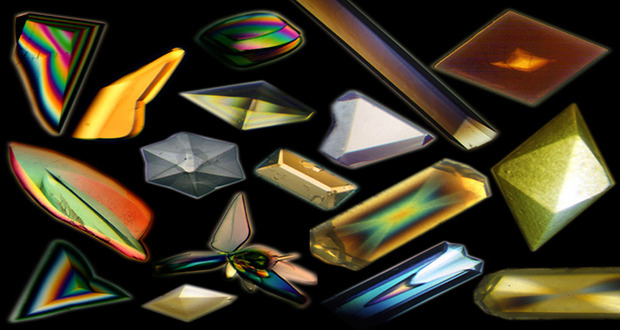

The key to conducting a crystallography study, of course, is to have a high-quality crystal on hand; however, proteins, along with other biological macromolecules, do not normally exist in concentrated environments in nature. Their size, irregular surfaces and relative chemical complexity, coupled with their sensitivity to environmental factors such as pH, temperature or ionic strength, tend not to be conducive to forming the regular repeating structures of crystals. Additionally, the large amount of chemical variation between individual proteins often requires researchers to design a unique crystallisation process on a case-by-case basis. For example, proteins that are embedded within a cellular membrane in nature may require special additives such as detergents to ensure a stable crystal form, whereas others may require a very specific pH to ensure consistent packing. Crystallising such proteins becomes a laborious, time-consuming challenge.

Slow and steady

Since an individual crystallisation test tends to take between a few days and a few weeks, and rates of crystallisation can be as low as 2% depending on the setup [1], researchers frequently attempt to crystallise multiple fractions simultaneously, under a variety of conditions, from a single sample of purified protein to increase their chances of success. These groups of fractions are stored in crystallisation chambers to promote crystal formation and are monitored by a camera that snaps a picture of each individual fraction. These pictures must then be analysed to determine if a crystal has grown. Automating this image analysis has been an active area of research for decades. However, in the case of protein crystal image analysis, traditional approaches have fallen short. As a result, one person often has the unfortunate task of manually checking huge numbers of individual images to determine whether a crystal, which can be smaller than a millimetre in size, has begun to grow. This tedious process draws out analyses and keeps researchers from more value-added tasks.
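To put a rough number on why screening many conditions at once improves the odds, the sketch below computes the chance of at least one crystal from a set of trials. It assumes each condition succeeds independently at the 2% rate quoted above, which is a simplification; the function names and the plate sizes in the loop are illustrative only.

```python
import math


def chance_of_at_least_one_hit(hit_rate: float, n_conditions: int) -> float:
    """Probability that at least one of n independent trials yields a crystal."""
    return 1.0 - (1.0 - hit_rate) ** n_conditions


def conditions_needed(hit_rate: float, target: float) -> int:
    """Smallest number of independent conditions that reaches the target probability."""
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - hit_rate))


if __name__ == "__main__":
    # Assumed 2% success rate per condition; the condition counts are illustrative.
    for n in (1, 24, 96, 384):
        print(n, round(chance_of_at_least_one_hit(0.02, n), 3))
    print(conditions_needed(0.02, 0.95))
```

Under these assumptions a single trial is a long shot, while on the order of a hundred or more conditions makes at least one hit likely. Every one of those conditions also produces images that someone has to check.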

Artificial neural networks are just the beginning in terms of advancing machine learning

This raises the question: what is causing the problem? Automating the image analysis process requires teaching a computer what constitutes a “crystal” within an image, so that it can subsequently recognise crystals in a new image. For example, to train a computer to recognise a cat, programmers would need to define what an edge looks like; define what combination of edges produces a shape; and classify what combination of shapes constitutes eyes, a nose, a mouth, ears and whiskers. In short, each requirement needs its own set of algorithms to check off each criterion of what makes a cat a cat. Creating the algorithms themselves is a very complex and time-intensive process as the models are developed and refined and the computer is “taught.”
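As an illustration of what “defining an edge” by hand involves, the sketch below applies the standard Sobel kernels to a tiny greyscale image and flags pixels where the brightness changes sharply. The toy image, the threshold and the function names are assumptions made for this example; they are not taken from any crystal-imaging system.

```python
from typing import List

Image = List[List[float]]

# Sobel kernels: hand-written definitions of a left-right and a top-bottom
# change in brightness.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def apply_kernel(image: Image, kernel: List[List[int]], row: int, col: int) -> float:
    """Weighted sum of the 3x3 neighbourhood centred on (row, col)."""
    return sum(
        kernel[dr + 1][dc + 1] * image[row + dr][col + dc]
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
    )


def edge_map(image: Image, threshold: float) -> List[List[bool]]:
    """Mark interior pixels whose gradient magnitude exceeds the threshold."""
    rows, cols = len(image), len(image[0])
    edges = [[False] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            gx = apply_kernel(image, SOBEL_X, r, c)
            gy = apply_kernel(image, SOBEL_Y, r, c)
            edges[r][c] = (gx * gx + gy * gy) ** 0.5 > threshold
    return edges


# Toy image (hypothetical): dark left half, bright right half.
toy = [[0.0] * 3 + [1.0] * 3 for _ in range(5)]
edges = edge_map(toy, threshold=1.0)
```

Even this first step needs a hand-picked threshold, and shapes, eyes and whiskers would each need further rules layered on top.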

However, in the case of protein crystals this would only create an automated model for one crystal: variations in colour, size and shape would likely dictate that a new model be made on a case-by-case basis. Additionally, writing algorithms that define what a “crystal” is could produce a number of false positives which would need to be manually rechecked, diminishing the model’s utility. To return to the cat example, a model may not recognise a cat if it is obscured by a hedge, or it may mistake a particularly small dog for a cat. Previous attempts at automated protein crystal image analysis produced false positives at a rate of approximately 20% [2]. As a result, the algorithm-based approach is not economical enough to automate the image analysis process. While qualitatively classifying images as likely candidates for further research (i.e. a “yes/no” classification to discard some images earlier in the process) can help scientists [3,4,5], moving beyond a semi-automated approach can benefit from modern machine “deep learning” technologies.
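A back-of-the-envelope calculation suggests why false positives erode the value of automation. The sketch below makes two loud assumptions that the article does not state: that “20%” means one in five crystal-free images is wrongly flagged, and that only 2% of images contain a crystal (the low-end crystallisation rate quoted earlier). The function name and the batch size of 1,000 are hypothetical.

```python
from typing import Tuple


def flagged_breakdown(
    n_images: int,
    crystal_rate: float,
    false_positive_rate: float,
    recall: float = 1.0,
) -> Tuple[float, float, float]:
    """Return (true hits, false hits, share of flagged images that are real crystals)."""
    crystals = n_images * crystal_rate
    empties = n_images - crystals
    true_hits = crystals * recall                 # crystals correctly flagged
    false_hits = empties * false_positive_rate    # empty wells wrongly flagged
    precision = true_hits / (true_hits + false_hits)
    return true_hits, false_hits, precision


# Assumed figures: 1,000 images, 2% contain crystals, 20% false-positive rate.
true_hits, false_hits, precision = flagged_breakdown(1000, 0.02, 0.20)
```

Under these assumptions, and even if no crystal is ever missed, about 196 of the roughly 216 flagged images would be false alarms that a person still has to recheck.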

Down deep

Deep learning is similar to traditional machine learning in that it employs a variety of algorithms to predict an output. However, deep learning differs in its structure, as it utilises a framework called an artificial neural network to carry out its analysis. Inspired by how neurons connect in a brain, artificial neural networks are a series of individual algorithms which receive weighted inputs from other “neurons” in the network and subsequently combine these weighted inputs to produce their own output. These neurons are arranged in a sequence of layers connected by “synapses,” which apply weights to the outputs from the prior layer and feed them into the next layer of neurons, and so forth. The key is that one neuron may connect to multiple neurons in the following layer by a number of synapses, with specific weights applied to each. The model is bookended by an input layer and an output layer; the layers in between, which perform most of the heavy lifting, are referred to as “hidden layers.”

As the network “learns,” the weighting of each synapse is modified to increase the overall accuracy of the network. A basic neural network has three layers: an input layer, a hidden layer and an output layer, but a deep learning network, such as a deep belief network, has multiple hidden layers with hundreds or thousands of synapses running in parallel.

Consider again our picture of a cat: the input layer would chop the picture into a collection of tiles; the first hidden layer would process these tiles and identify edges (its weighting for the next layer is how “sure” it is that an edge is an edge); the next layers would look at gradually larger features; finally, the output layer provides a “yes/no” decision on whether or not the “cat” is a cat, usually coupled with a percentage indicating how sure it is.
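The layered flow described above can be sketched in a few lines. This is a minimal illustration rather than a trained model: the weights, biases and three input values are made up, and the sigmoid activation is simply one common way to squash each neuron's weighted sum into a value between 0 and 1.

```python
import math
from typing import List, Tuple

Layer = Tuple[List[List[float]], List[float]]  # (weights per neuron, bias per neuron)


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs: List[float], weights: List[List[float]], biases: List[float]) -> List[float]:
    """Each neuron sums its weighted inputs, adds a bias and applies a sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def network_forward(inputs: List[float], layers: List[Layer]) -> List[float]:
    """Feed the outputs of each layer into the next, from input to output."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Hypothetical, untrained weights: 3 inputs -> 2 hidden -> 2 hidden -> 1 output.
layers: List[Layer] = [
    ([[0.5, -0.4, 0.1], [-0.3, 0.8, 0.2]], [0.0, 0.1]),
    ([[1.2, -0.7], [0.4, 0.9]], [0.05, -0.05]),
    ([[1.5, -1.1]], [0.0]),
]
tile_features = [0.9, 0.1, 0.4]  # made-up values standing in for image tiles
confidence = network_forward(tile_features, layers)[0]
label = "cat" if confidence >= 0.5 else "not cat"
```

Training consists of adjusting the numbers in `layers` until `confidence` agrees with labelled examples. A real image network would have far more inputs and neurons than this toy.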

Artificial neural networks are not new, but previously had limited application due to the large computational power needed to train a network. However, recent advances in deep learning have caused an explosion in their use – for example, in facial recognition, language processing, and computer vision. These technological advances, especially the use of graphics processing units, have greatly accelerated the adoption of neural networks.

In one famous example, Professor Andrew Ng trained a neural network to identify a picture of a cat – without telling it what a “cat” is – by having it browse 10 million random thumbnails from YouTube videos. The difference: instead of programming what an “edge” or a “paw” is, the machine learned these things by identifying patterns in the data. While a ground-breaking discovery in its own right, this presents a problem for protein crystal image analysis: researchers often don’t have 10 million images of protein crystal wells available to them. One possible solution is a variation on the theme of deep learning architecture called generative adversarial networks (GANs).

The extended deep

GANs are an extension of the idea of deep learning in the sense that the computer continues to teach itself. In this case, however, GANs consist of two artificial neural networks: a generator network and a discriminator network. Both are trained from the same set of training data, with multiple parameters tied together (e.g. “cat – tabby;” “cat – calico;” “dog – null;” etc.). Following this initial round of training, the goal of the generator becomes to “fool” the discriminator by generating data that appears as if it is from the training set. The discriminator then adjusts its own weighting based on how it was tricked in the previous round of training, the generator adjusts its weighting based on how it was caught, and the cycle continues.

What this means is that it becomes theoretically possible to train good models with data sets that are much smaller than previously needed. While GANs are still a relatively new approach and still need work to reach mainstream adoption, this style of deep learning becomes more feasible for researchers in the biopharmaceutical space, who may only have thousands of data points on hand instead of millions. Additionally, as the models improve they may become better able to recognise more types of crystals without needing to define what a new crystal looks like. They will have taught themselves what the defining features of a “crystal” are.
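The alternating “fool” and “adjust” cycle can be sketched with a deliberately tiny, unconditional GAN that works on single numbers rather than images. Everything here is an assumption made for illustration: the class names, the linear generator, the logistic discriminator, the learning rate, and the made-up “real” data drawn from a normal distribution centred on 4. It shows only the update rules and makes no claim to converge; toy set-ups like this one can oscillate rather than settle.

```python
import math
import random


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


class Discriminator:
    """Logistic model: estimated probability that a value came from the real data."""

    def __init__(self) -> None:
        self.w, self.c = 0.0, 0.0

    def prob_real(self, x: float) -> float:
        return sigmoid(self.w * x + self.c)

    def update(self, real: float, fake: float, lr: float) -> None:
        # One gradient-descent step on -log D(real) - log(1 - D(fake)).
        d_real, d_fake = self.prob_real(real), self.prob_real(fake)
        grad_w = -(1.0 - d_real) * real + d_fake * fake
        grad_c = -(1.0 - d_real) + d_fake
        self.w -= lr * grad_w
        self.c -= lr * grad_c


class Generator:
    """Linear map from random noise z to a generated value."""

    def __init__(self) -> None:
        self.a, self.b = 1.0, 0.0

    def sample(self, z: float) -> float:
        return self.a * z + self.b

    def update(self, z: float, disc: Discriminator, lr: float) -> None:
        # One gradient-descent step on -log D(G(z)): shift output towards
        # values the discriminator currently rates as real.
        d_fake = disc.prob_real(self.sample(z))
        grad_out = -(1.0 - d_fake) * disc.w
        self.a -= lr * grad_out * z
        self.b -= lr * grad_out


def train(steps: int, lr: float = 0.01, seed: int = 0):
    """Alternate one discriminator update and one generator update per step."""
    rng = random.Random(seed)
    gen, disc = Generator(), Discriminator()
    for _ in range(steps):
        real = rng.gauss(4.0, 0.5)  # hypothetical "real" measurement
        fake = gen.sample(rng.gauss(0.0, 1.0))
        disc.update(real, fake, lr)
        gen.update(rng.gauss(0.0, 1.0), disc, lr)
    return gen, disc
```

Calling `train(200)` runs 200 rounds of the cycle and returns both models for inspection. A practical GAN for crystal images would replace both toy models with deep networks and would normally be built with an established deep learning library.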

The question of how to automatically analyse images of protein crystals at an economic scale using software is a complex one, and scientists have wrestled with it for years. GANs, with their need for smaller data sets and ability to “self-teach,” offer an exciting potential avenue for new innovations in the biopharmaceutical space and could provide the breakthrough needed to overcome current challenges.

And with scientists on the prowl for answers, one thing is certain: curiosity will not kill this cat.

Author: Sean McGee is Product Marketing Manager with Dassault Systèmes BIOVIA.


References

1. Segelke BW. Journal of Crystal Growth. 2001;232:553–562.
2. Liu R, Freund Y, Spraggon G. Image-based crystal detection: a machine-learning approach. Acta Crystallographica Section D: Biological Crystallography. 2008;64:1187–1195.
3. Zuk WM, Ward KB. Journal of Crystal Growth. 1991;110:148–155.
4. Cumbaa CA, Lauricella A, Fehrman N, Veatch C, Collins R, Luft J, DeTitta G, Jurisica I. Acta Crystallographica Section D: Biological Crystallography. 2003;59:1619–1627.
5. Po MJ, Laine AF. Leveraging genetic algorithm and neural network in automated protein crystal recognition. Engineering in Medicine and Biology Society, 2008. EMBS 2008. 30th Annual International Conference of the IEEE; 2008. pp. 1926–1929.