Big, bold and complex…how to handle the data from the LHC restart?

2 Jun 2015 by qwqcdhhcbc.d qwqcdhhcbc.d

Reda Tafirout, lead researcher at TRIUMF, Canada’s national research lab for nuclear and particle physics, tells us what being a Tier-1 data centre entails and discusses how the lab prepared for the LHC’s recent switch-on.

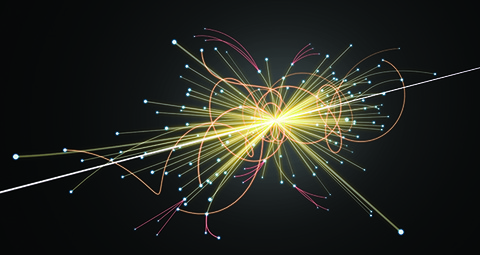

TRIUMF is at the vanguard of scientific innovation and discovery. Our scientists play a major role in the ATLAS experiment at the Large Hadron Collider (LHC) at CERN. The LHC collides proton beams together at the highest energy ever achieved in a laboratory to recreate the conditions just after the Big Bang; the collision debris is recorded by the ATLAS detector.

As big as a cathedral, ATLAS is like a giant megapixel digital camera that looks at millions of proton collisions per second. We filter those millions of collision events down to about 1,000 events per second, which are then stored for analysis. For the raw data to be useful to physicists, it must be reconstructed and calibrated, like pixels from an electronic image.

To understand the data and analyse it properly, we have to compare it with theoretical predictions, which in turn require simulations of billions of proton collisions. We are looking for incredibly rare events, and that requires huge amounts of data acquisition, recording, processing and analysis.

When the LHC is colliding protons, the data produced is stored initially on disk at CERN’s Tier-0 centre. The disk capacity at CERN is not infinite; there is probably only enough space for a few days’ worth of data, so it is important that data is farmed out to the Tier-1 centres quickly.

Once we have processed the raw data from ATLAS, the derived data needs to be stored and distributed to several sites around the world. ATLAS is presently storing close to 150 PB of data across its entire distributed computing infrastructure (the Grid).

Data is expensive; every megabyte of raw data produced by the LHC is precious. So it is paramount that the data is never corrupted or lost, and that it is easily accessible around the clock to the entire ATLAS community worldwide.

The data also needs to be distributed to over 3,000 scientists across 38 countries for analysis as quickly and efficiently as possible. There are 70 Tier-2 facilities around the world where most of this analysis takes place. The ATLAS project can call upon upwards of 150,000 computing cores for intensive simulations, analysis and modelling, enabling scientists to extract meaningful results.

For our most recent refresh project last summer, we wanted to consolidate storage with dCache, a system used to store and retrieve massive amounts of data that is distributed among a large number of heterogeneous server nodes under a single virtual file system. According to the dCache organisation, the LHC’s ability to produce a sustained stream of data in the order of 300MB/s is equivalent to creating a stack of CDs as high as the Eiffel Tower once a week! Most of the Tier-1 and Tier-2 centres use dCache for global data distribution.
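The Eiffel Tower comparison can be checked with a few lines of arithmetic. The sketch below assumes a standard 700 MB CD that is 1.2 mm thick and a tower height of roughly 324 m; none of these figures come from the dCache organisation, and the helper name is purely illustrative.

```python
# Back-of-the-envelope check of the "stack of CDs" comparison.
# Assumptions (not from the article): 700 MB per CD, 1.2 mm per disc,
# Eiffel Tower height of about 324 m, decimal units (1 TB = 1e6 MB).

SECONDS_PER_WEEK = 7 * 24 * 60 * 60


def weekly_cd_stack(rate_mb_per_s, cd_capacity_mb=700, cd_thickness_mm=1.2):
    """Return (terabytes per week, number of CDs, stack height in metres)."""
    total_mb = rate_mb_per_s * SECONDS_PER_WEEK
    cds = total_mb / cd_capacity_mb
    height_m = cds * cd_thickness_mm / 1000
    return total_mb / 1e6, cds, height_m


terabytes, cds, height_m = weekly_cd_stack(300)
print(f"{terabytes:.1f} TB per week, {cds:,.0f} CDs, stack of {height_m:.0f} m")
```

Under these assumptions, a sustained 300MB/s comes to roughly 181 TB a week, or a stack of CDs a little over 310 m tall, which is in the same range as the tower.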

DataDirect Networks (DDN) provided us with an embedded dCache solution via its DDN SFA12KXE. The tightly integrated solution runs embedded dCache virtual servers that ingest data from the ATLAS experiment and make it available to thousands of researchers globally.

Aside from ensuring tight integration with dCache, we needed to address concerns about CPU performance and latency. Data can come to us from any of our global locations, so we could encounter severe latency or bottlenecks, depending on where it is coming from and our ability to receive it. We needed to ensure high CPU usage efficiency as data is processed and analysed on a 24/7 basis.

We can receive and send data all around the world. Around-the-clock WAN transfers, as well as internal data processing and distribution, are handled with ease. When you consider that ATLAS uses 150,000 cores at any given time globally, the ability to handle the highest levels of intensive data processing and simulation is crucial.

DOING THE DATA DANCE

In 2006 we signed a Memorandum of Understanding with CERN to be one of ten Tier-1 data centre providers directly supporting the ATLAS experiment. As a research-based organisation, our scientists want to be working on what is, arguably, the largest physics project ever undertaken. In the same year, we received funding to build our data centre, and in 2007 we developed a proof-of-concept data centre design and went out to tender.

Paramount to our proposed design were reliability, density and performance, and those criteria still apply to this day. Density and footprint are not so much of a concern for organisations like CERN, whose main data centre is housed in a 1,450 m² room. For us, based in Vancouver, the cost of real estate is very high, and we had limited floor space in which to build a large-scale, data-intensive facility for 24/7 operations. As a result, a key focus for us was finding a high-density server environment so that we could benefit from the resulting reduction in operational expense.

In 2007, we selected DDN and purchased an initial 1PB of storage. Over the past five years, we have emerged on the international stage for our contribution to what is heralded by many as the most important discovery in particle physics research to date: the discovery of the Higgs boson.

Our storage environment has grown by more than two orders of magnitude, from a dozen terabytes in early 2007 to more than 7 PB in 2012, with a crucial portion entrusted to our storage partner DDN. If we are to keep pace with the growing demands and expectations that ATLAS has of its Tier-1 data centre providers, we need solutions with the highest levels of performance and density.

In 2009, 2012 and last summer, we upgraded our storage infrastructure. Using the DDN SFA system, we reduced our storage running costs by a factor of five. We took our existing high-density storage environment from 21 4U servers spread across multiple racks to eight virtual nodes in half a rack; power consumption fell from 25 kW to less than 7 kW, with major additional savings on cooling.
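To put the consolidation figures quoted above into perspective, here is a rough sketch of the arithmetic. It assumes a standard 42U rack and that both systems draw their quoted power continuously all year; the 7 kW figure is treated as an upper bound, cooling is ignored, and the variable names are illustrative only.

```python
# Rough arithmetic on the consolidation figures quoted in the article.
# Assumptions (not from the article): 42U racks, constant power draw
# around the clock, and 7 kW taken as the upper bound of the new system.

HOURS_PER_YEAR = 24 * 365

old_servers, units_per_server = 21, 4
rack_units = 42

old_space_u = old_servers * units_per_server  # rack units used before
new_space_u = rack_units // 2                 # half a rack afterwards

old_power_kw, new_power_kw = 25, 7
power_saving_kw = old_power_kw - new_power_kw
energy_saving_kwh = power_saving_kw * HOURS_PER_YEAR

print(f"Space: {old_space_u}U -> {new_space_u}U ({old_space_u // new_space_u}x denser)")
print(f"Power: {power_saving_kw} kW saved ({power_saving_kw / old_power_kw:.0%})")
print(f"Energy: at least {energy_saving_kwh:,} kWh saved per year")
```

On these assumptions, the footprint shrinks from 84U to 21U and the electrical load falls by at least 18 kW, or about 72 per cent, before any cooling savings are counted.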

After a maintenance break of nearly two years, which saw a refit and a near doubling of the LHC’s collision energy, CERN restarted the LHC. British scientist Professor David Charlton, from the University of Birmingham, who heads the ATLAS experiment team, said: “We’re heading for unexplored territory. It’s going to be a new era for science.”

It took 50 years to find the Higgs boson, and the LHC will be running for at least another 20 years. It remains to be seen what interesting discoveries lie in the mountains of data that will soon be sent our way. With the LHC’s collision energy now increased to 13 tera-electron volts (TeV), it is perfectly reasonable to think that the LHC will reveal new particles and phenomena. And our scientists will once again be involved in trying to discover the new physics of the universe.